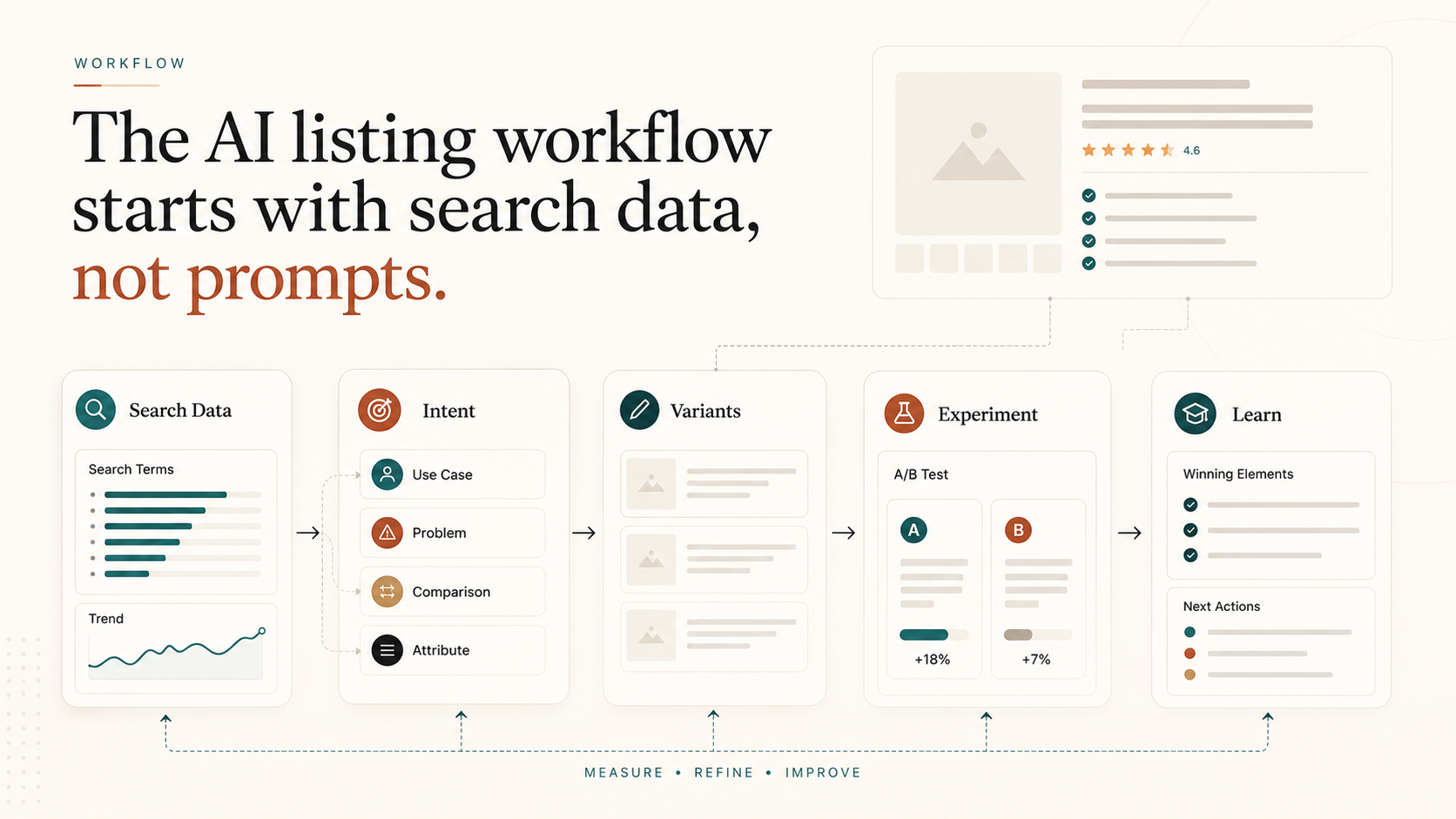

The AI listing workflow starts with search data, not prompts

Most AI listing copy sounds polished but misses buyer intent. The better workflow starts with search data, maps intent, writes controlled variants, and tests the result.

By WAYAMZ Team

The mistake is not using AI to write Amazon listings.

The mistake is starting with the prompt.

A generic prompt can produce copy that sounds clean, confident, and completely disconnected from how buyers actually search. That is why so many AI-written listings read better than the old keyword dump but still fail to lift rank or conversion.

The better workflow starts with marketplace data.

Before asking AI to write anything, the team should know which search terms matter, which buyer objections appear in reviews, which product attributes drive comparison, and where the current listing is leaking clicks or purchases.

Step 1: Pull The Buyer Language

Amazon copy should begin with search behavior.

Use the search term report, Brand Analytics, Search Query Performance (SQP), review language, customer questions, and competitor positioning to understand what buyers are trying to solve. The goal is not to collect more keywords. The goal is to understand intent.

One term may signal price sensitivity. Another may signal compatibility. Another may signal giftability, durability, safety, size anxiety, or use-case fit.

AI writes much better copy when it is asked to write around those patterns instead of from a flat keyword list.

Step 2: Map Intent Before Writing

Do not give the model a pile of terms and ask for a title.

Cluster the terms first:

- Core category language: what the product is.

- Use-case language: where and why the buyer needs it.

- Objection language: what the buyer is worried about.

- Comparison language: what the shopper is using as a substitute.

- Attribute language: size, material, compatibility, pack count, or specification.

This makes the prompt sharper. It also prevents the listing from becoming a polished paragraph that hides the most important conversion detail.
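The clustering step can be sketched in a few lines of Python. This is a minimal illustration, not a production classifier: the cue words in `INTENT_CUES`, the cluster names, and the sample terms are all hypothetical, and a real team would draw its cues from its own search term and review data.

```python
from collections import defaultdict

# Hypothetical cue phrases per intent cluster (assumption, not Amazon data).
# Replace with cues drawn from your own search terms and reviews.
INTENT_CUES = {
    "use_case": ["for travel", "for office", "for kids", "outdoor"],
    "objection": ["leak proof", "bpa free", "safe", "durable"],
    "comparison": ["vs", "alternative", "like"],
    "attribute": ["oz", "inch", "pack", "stainless", "compatible"],
}


def cluster_terms(terms):
    """Assign each search term to the first cluster whose cue it contains.

    Matching is on whole words or phrases. Terms that match no cue are
    treated as core category language.
    """
    clusters = defaultdict(list)
    for term in terms:
        padded = f" {term.lower()} "  # pad so cues match whole words only
        for cluster, cues in INTENT_CUES.items():
            if any(f" {cue} " in padded for cue in cues):
                clusters[cluster].append(term)
                break
        else:
            # No cue matched in any cluster.
            clusters["core_category"].append(term)
    return dict(clusters)


# Illustrative search terms (made up for this example).
sample_terms = [
    "insulated water bottle",
    "water bottle for kids",
    "leak proof water bottle",
    "plastic bottle alternative",
    "32 oz water bottle",
]

for cluster, members in cluster_terms(sample_terms).items():
    print(f"{cluster}: {members}")
```

Rule-based matching like this is crude, but it forces the team to name each cluster before the model sees a single term. Anything that lands in the wrong bucket is a prompt for a human to look at the term, not a reason to trust the rule.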

Step 3: Generate Variants, Not The Final Answer

AI should produce options.

Ask for several title structures, bullet frameworks, and A+ section angles. Each should have a purpose. One version may prioritize clarity. One may prioritize semantic coverage. One may prioritize comparison shoppers. One may be built for mobile scanning.

The operator then chooses what to test, edits for brand truth, and removes anything that overclaims.

This is the point where human judgment matters most. A model can make a benefit sound stronger than the product can support. Amazon shoppers punish that quickly through returns, reviews, and conversion drop-off.
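One way to keep variants controlled is to build one prompt per purpose from the same verified facts and intent clusters. The sketch below only assembles prompt text; it does not call any model. The purpose names, instructions, and the `build_variant_prompts` helper are hypothetical, chosen to mirror the angles described above.

```python
# Hypothetical variant purposes (assumption), mirroring the angles in the text.
VARIANT_PURPOSES = {
    "clarity": "State what the product is and its key specification first.",
    "semantic_coverage": "Cover the main use cases and attributes in natural language.",
    "comparison": "Address what shoppers compare this product against.",
    "mobile_scan": "Front-load the most important detail for small screens.",
}


def build_variant_prompts(product_facts, clusters):
    """Return one title prompt per purpose.

    product_facts: list of claims the operator has already verified.
    clusters: dict mapping an intent cluster name to its search terms.
    """
    facts = "\n".join(f"- {fact}" for fact in product_facts)
    intents = "\n".join(
        f"- {name}: {', '.join(terms)}" for name, terms in clusters.items()
    )
    prompts = {}
    for purpose, instruction in VARIANT_PURPOSES.items():
        prompts[purpose] = (
            "Write one Amazon listing title.\n"
            f"Purpose: {instruction}\n"
            f"Verified product facts (do not claim anything else):\n{facts}\n"
            f"Buyer intent clusters:\n{intents}\n"
        )
    return prompts


# Illustrative inputs (made up for this example).
example_prompts = build_variant_prompts(
    ["32 oz capacity", "stainless steel body"],
    {"attribute": ["32 oz water bottle"], "use_case": ["water bottle for kids"]},
)
print(example_prompts["clarity"])
```

Because every prompt shares the same fact list, the variants differ in structure and emphasis rather than in what they promise, which makes the later test easier to interpret.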

Step 4: Test The Change

If the brand has access to Manage Your Experiments, use it.

Tests on titles, A+ content, and other eligible content can show whether the new structure actually improves buyer response. Where formal experiments are not available, use disciplined before-and-after measurement: CTR, conversion rate (which Amazon reports as unit session percentage), search rank, and SQP movement by query.

Do not declare victory because the copy reads better.

Declare progress when shoppers click more, convert better, or when the right search queries begin moving in the right direction.
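For teams without formal experiments, the before-and-after arithmetic is simple enough to script. The sketch below assumes two periods of equal length and uses made-up numbers. The `PeriodMetrics` class and its field names are hypothetical, and where each figure comes from (ads reports, Business Reports, SQP) is left to the operator.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    """Totals for one measurement period (field names are assumptions)."""
    impressions: int
    clicks: int
    sessions: int
    units_ordered: int

    @property
    def ctr(self) -> float:
        # Click-through rate: clicks divided by impressions.
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def unit_session_pct(self) -> float:
        # Unit session percentage: units ordered divided by sessions.
        return self.units_ordered / self.sessions if self.sessions else 0.0


def relative_change(before: float, after: float) -> float:
    """Return (after - before) / before, e.g. 0.2 for a 20% lift."""
    if before == 0:
        raise ValueError("Baseline is zero; relative change is undefined.")
    return (after - before) / before


# Illustrative numbers only, not real results.
before = PeriodMetrics(impressions=10_000, clicks=300, sessions=280, units_ordered=28)
after = PeriodMetrics(impressions=10_000, clicks=360, sessions=300, units_ordered=36)

print(f"CTR change: {relative_change(before.ctr, after.ctr):+.1%}")
print(
    "Unit session % change: "
    f"{relative_change(before.unit_session_pct, after.unit_session_pct):+.1%}"
)
```

A before-and-after comparison does not control for seasonality, price changes, or ad spend, so treat the result as a signal to investigate rather than as proof.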

Step 5: Feed Results Back Into The Next Version

The strongest AI listing workflow is circular.

Data informs the prompt. AI creates variants. Operators edit. Experiments measure. Results feed the next prompt.

That loop is much more durable than “write me five bullets for this product.”

It also fits the way Amazon search is moving. As AI shopping assistants and semantic matching become more visible, listings need to explain the product completely, not just repeat keywords. Complete does not mean long. It means clear enough for the buyer and the machine to understand the same promise.

Keep The Human Editor In The Loop

The most dangerous AI listing output is the version that sounds plausible but is not true.

Operators should check every claim against the actual product, packaging, compliance rules, and customer experience. If a bullet promises a use case the product cannot support, the short-term click lift can turn into returns and negative reviews. If a title overreaches on compatibility, search traffic may improve while conversion quality gets worse.

The human editor’s job is to keep the listing commercially sharp and operationally true. AI can draft faster, but the brand still owns the promise.
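A small script can support that review by flagging known claim phrases the product does not back up. This is a rough aid under stated assumptions, not a substitute for the editor: the `claim_support` table and the `flag_unsupported_claims` helper are hypothetical, and the function only catches phrases someone has already listed.

```python
def flag_unsupported_claims(copy_text, claim_support):
    """Return listed claim phrases that appear in the copy but are unsupported.

    claim_support: dict mapping a claim phrase to True if the product,
    packaging, and compliance review support it, and False otherwise.
    Matching is a case-insensitive substring check.
    """
    text = copy_text.lower()
    return [
        phrase
        for phrase, supported in claim_support.items()
        if phrase.lower() in text and not supported
    ]


# Illustrative claim table and draft copy (made up for this example).
claim_support = {
    "leak proof": True,
    "dishwasher safe": False,
    "fits all cup holders": False,
}
draft = "Leak proof lid. Dishwasher safe and fits most cup holders."

print(flag_unsupported_claims(draft, claim_support))
```

The draft above would still need a human read: "fits most cup holders" passes the phrase check, but only the operator knows whether it is true.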

The Operator Read

AI can make listing work faster. It cannot decide what the market is saying.

Start with search data. Map intent. Generate controlled variants. Test the result. Keep the parts that shoppers prove they understand.

That is the difference between AI copy and an AI-assisted listing system.